- Arbor Networks - DDoS Experts


Remembering SQL Slammer

A 20-Year Retrospective

Executive Summary

Twenty years ago, the internet came as close to a total meltdown as we’ve seen since its commercialization in the 1990s. A UDP network worm payload of just 376 bytes, targeting UDP destination port 1434, aggressively propagated to all vulnerable, internet-connected Microsoft SQL Server hosts worldwide within a matter of minutes. Popularly known as the SQL Slammer worm (the name Sapphire was suggested within the academic community, but it didn't catch on), it infected ~75K vulnerable servers worldwide. The significant disruption it caused made international news. It was enough to bring many networks to a screeching halt, and it disrupted retail credit card point-of-sale systems and ATMs worldwide (as early as 2003, many of these systems were already dependent upon internet connectivity to function).

SQL Slammer was the latest in a series of aggressively propagating internet worms such as CodeRed and NIMDA, which were intended to compromise vulnerable computers and subsume them into botnets which could be used to send spam email, propagate malware, and launch DDoS attacks. Like the author of the original Morris Internet Worm of 1988, the authors of these newer worms didn’t seem to realize that designing them to propagate at network line rates would result in an inadvertent DDoS attack against the networks to which they were connected - thus defeating the purpose of the worm, which was to compromise as many systems as possible.

Disassembly of the SQL Slammer executable revealed that its release was almost certainly accidental; it appeared that the worm’s author was in the process of optimizing its pseudo-random network propagation algorithm, and probably intended to incorporate a reasonable propagation delay later in the development process. It was theorized that the worm author (who was, we should remember, a criminal seeking to illegally compromise vulnerable servers for nefarious ends) accidentally cross-connected a private development network to an internet-connected network, and that a copy of the worm running in the development environment was able to escape into the wild and begin spreading across the global internet at record speed, wreaking widespread havoc along the way.

In many ways, SQL Slammer’s unintended collateral damage constituted a universal DDoS attack which many security operations specialists had theorized would someday occur. In other words, disruptive internet traffic aimed not at any specific hosts or networks, but rather a DDoS attack free-for-all.

By 2003, a relatively small cadre of security-focused participants within the internet operational community had already accumulated a significant amount of experience and expertise in dealing with the emerging threat of deliberate DDoS attacks. Many of the principles and best current practices (BCPs) which those practitioners were in the process of formulating and evangelizing served the internet in good stead when SQL Slammer burst onto the scene. Netscout Arbor Sightline (known as Peakflow SP, at the time) was key to network operators around the world rapidly understanding what was taking place, and then working in concert to stem the tide of disruptive traffic and restore internet connectivity across the globe.

Background

We should consider the 2003 internet in its historical context. Internet security was not yet accorded the near-universal importance it has today. Network engineers were regularly butting heads with security specialists. The network packet-pushers were generally opposed to most network-based traffic mangling and middlebox devices; the security crowd, especially in campus and enterprise environments, generally favored some sort of permissions-based architecture, where imperfect protections and stateful centralization at aggregation points generally ignored the potential problem of collateral damage due to state exhaustion and overly restrictive network access policies.

The network engineers argued that the security threats of the day were generally best addressed as close to the origins of problematic traffic as possible, which usually meant a focus on better host and application security practices. However, the security specialists countered that this was impractical, and that more restrictive network access control policies were the answer.

Further, the network engineers insisted that implementing and keeping intricate policies updated at scale simply wasn’t possible, and that cluttering the internet with such controls would result in unreliable connectivity and, eventually, in all traffic being encrypted, resulting in even greater security challenges. Two decades on, it’s apparent that both groups had a point.

The most popular operating systems on the internet at this time were Windows 2000 and Windows XP. Millions of these systems were connected to the internet, but many had no network-based protections shielding them from hostile traffic, and most were rarely (if ever) patched. Practically all these systems had relatively weak host-based protection against remote exploits. The basic firewall (packet filtering) capability that Windows XP offered at the time was not enabled by default (this would come later, with Windows XP Service Pack 2, in 2004).

Meanwhile, the internet bandwidth and throughput available to end-hosts was increasing dramatically. The standard LAN-based connection speed in 2003 was 100 Mb/sec switched Ethernet, which was usually only a single order of magnitude slower than many organizations’ entire internet transit capacity. This meant that it would only take a small number of hosts to saturate many ISP peering and backbone links, not to mention enterprise internet transit links. Home users around the world were also moving from dial-up to higher-speed, always-on cable modem and DSL networks. A relatively small number of hosts on a few access networks at this time were a potent weapon for a DDoS attack, or perhaps a fast-spreading worm. To illustrate this point, it’s worth noting that only a few thousand DDoS-capable bots were able to render some DNS root server instances inaccessible in a deliberate DDoS attack in 2007.
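To make the arithmetic concrete, here is a minimal sketch of how few line-rate hosts it takes to fill a link. The host speed comes from the paragraph above; the link capacities are illustrative assumptions, not measurements from 2003, and `hosts_to_saturate` is a hypothetical helper name.

```python
import math


def hosts_to_saturate(link_bps: float, host_bps: float) -> int:
    """Smallest number of hosts, each sending at host_bps, whose
    combined traffic meets or exceeds link_bps."""
    return math.ceil(link_bps / host_bps)


HOST_BPS = 100_000_000  # 100 Mb/sec switched Ethernet, as described above

# ASSUMPTION: illustrative link capacities chosen for this example only.
EXAMPLE_LINKS_BPS = {
    "155 Mb/sec link": 155_000_000,
    "1 Gb/sec link": 1_000_000_000,
}

for name, capacity in EXAMPLE_LINKS_BPS.items():
    print(f"{name}: {hosts_to_saturate(capacity, HOST_BPS)} host(s) at line rate")
```

Under these assumptions, a link one order of magnitude faster than the hosts attached to it is filled by just ten of them.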

The Exploit and The Worm

In the summer of 2002, multiple problems with Microsoft SQL Server were publicly disclosed to the BUGTRAQ mailing list, along with a coordinated patch release from Microsoft. One of the vulnerabilities was a buffer overflow that could be triggered by sending just a few bytes to the service over the network in a small UDP message.

Unfortunately, the patch released by Microsoft caused problems on various types of production systems. Subsequent patches were issued, and then withdrawn, as it was proving difficult for Microsoft to develop a patch which was universally applicable to vulnerable systems.

In the meantime, on January 25th of 2003, between the hours of 0500 and 0600 UTC (around midnight for most of the continental US), what would become known as the SQL Slammer worm began propagating across the internet to vulnerable systems at great speed. Once a vulnerable server was compromised by the worm, that host would then begin scanning the entire theoretical IPv4 address space trying to further propagate the Slammer worm. This resulted in exponential growth of all-points UDP port 1434 traffic saturating the internet and connected networks. This saturation began filling peering and core links, choking the state-tables of stateful firewalls, and overwhelming the control planes of routers and switches which hadn’t been hardened against such traffic excursions via the aforementioned BCPs for network infrastructure self-protection.
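The exponential growth described above can be sketched with a simple expected-value model of a uniformly random scanning worm. This is an illustration under stated assumptions, not a reconstruction of Slammer's actual behavior: the vulnerable population is the ~75K figure quoted in this article, the per-host scan rate is a made-up illustrative value, scanning is assumed to be perfectly uniform, and link saturation (which slowed the real worm) is ignored. The function names are hypothetical.

```python
ADDRESS_SPACE = 2 ** 32  # size of the theoretical IPv4 address space


def simulate_random_scanning(vulnerable, scans_per_host_per_sec, seconds,
                             initially_infected=1.0):
    """Return the expected number of infected hosts at each second."""
    infected = float(initially_infected)
    history = [infected]
    for _ in range(seconds):
        # Chance that a single scan lands on a vulnerable, uninfected host.
        hit_probability = (vulnerable - infected) / ADDRESS_SPACE
        new_infections = infected * scans_per_host_per_sec * hit_probability
        infected = min(vulnerable, infected + new_infections)
        history.append(infected)
    return history


def seconds_to_fraction(history, vulnerable, fraction):
    """First second at which `fraction` of vulnerable hosts are infected."""
    for second, infected in enumerate(history):
        if infected >= fraction * vulnerable:
            return second
    return None


if __name__ == "__main__":
    VULNERABLE = 75_000   # figure quoted in this article
    SCAN_RATE = 4_000     # ASSUMPTION: illustrative scans/sec per infected host
    history = simulate_random_scanning(VULNERABLE, SCAN_RATE, seconds=600)
    t90 = seconds_to_fraction(history, VULNERABLE, 0.9)
    print(f"90% of vulnerable hosts infected after about {t90} seconds")
```

Even this crude model reaches saturation of the vulnerable population in minutes rather than hours, because every newly infected host immediately adds its own scanning capacity.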

Slammer exploited one of the buffer overflow vulnerabilities for which Microsoft had issued various patches, patches that had themselves caused problems on some production systems. In an article for IEEE Security & Privacy, researchers estimated the worm reached 55 million scans per second and ultimately compromised practically all vulnerable systems connected to the internet (approximately 75K servers) within only a few minutes after its escape from the worm developer’s lab network.

Infected hosts would immediately begin emitting scanning traffic at near the maximum rate of their network interfaces. On many enterprise and university networks, it took only a handful of infected hosts to completely saturate their external internet connections with scanning traffic, completely cutting them off from the internet.
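As a rough worked example of what "near the maximum rate" means for a single host, the sketch below estimates the upper bound on packets per second for a 376-byte UDP payload on 100 Mb/sec Ethernet. It assumes an IPv4 header without options and standard Ethernet framing overhead; real hosts would have fallen somewhat short of this bound.

```python
UDP_PAYLOAD_BYTES = 376    # Slammer payload size, per this article
UDP_HEADER_BYTES = 8
IPV4_HEADER_BYTES = 20     # ASSUMPTION: no IP options
# Ethernet per-frame overhead: 14-byte header + 4-byte FCS
# + 8-byte preamble/SFD + 12-byte interframe gap.
ETHERNET_OVERHEAD_BYTES = 14 + 4 + 8 + 12


def max_packets_per_second(link_bps: float) -> float:
    """Upper bound on worm packets per second a link can carry."""
    wire_bytes = (UDP_PAYLOAD_BYTES + UDP_HEADER_BYTES
                  + IPV4_HEADER_BYTES + ETHERNET_OVERHEAD_BYTES)
    return link_bps / (wire_bytes * 8)


print(round(max_packets_per_second(100_000_000)))
```

Under these assumptions a single infected host on 100 Mb/sec Ethernet could emit tens of thousands of scan packets every second, each aimed at a different destination address.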

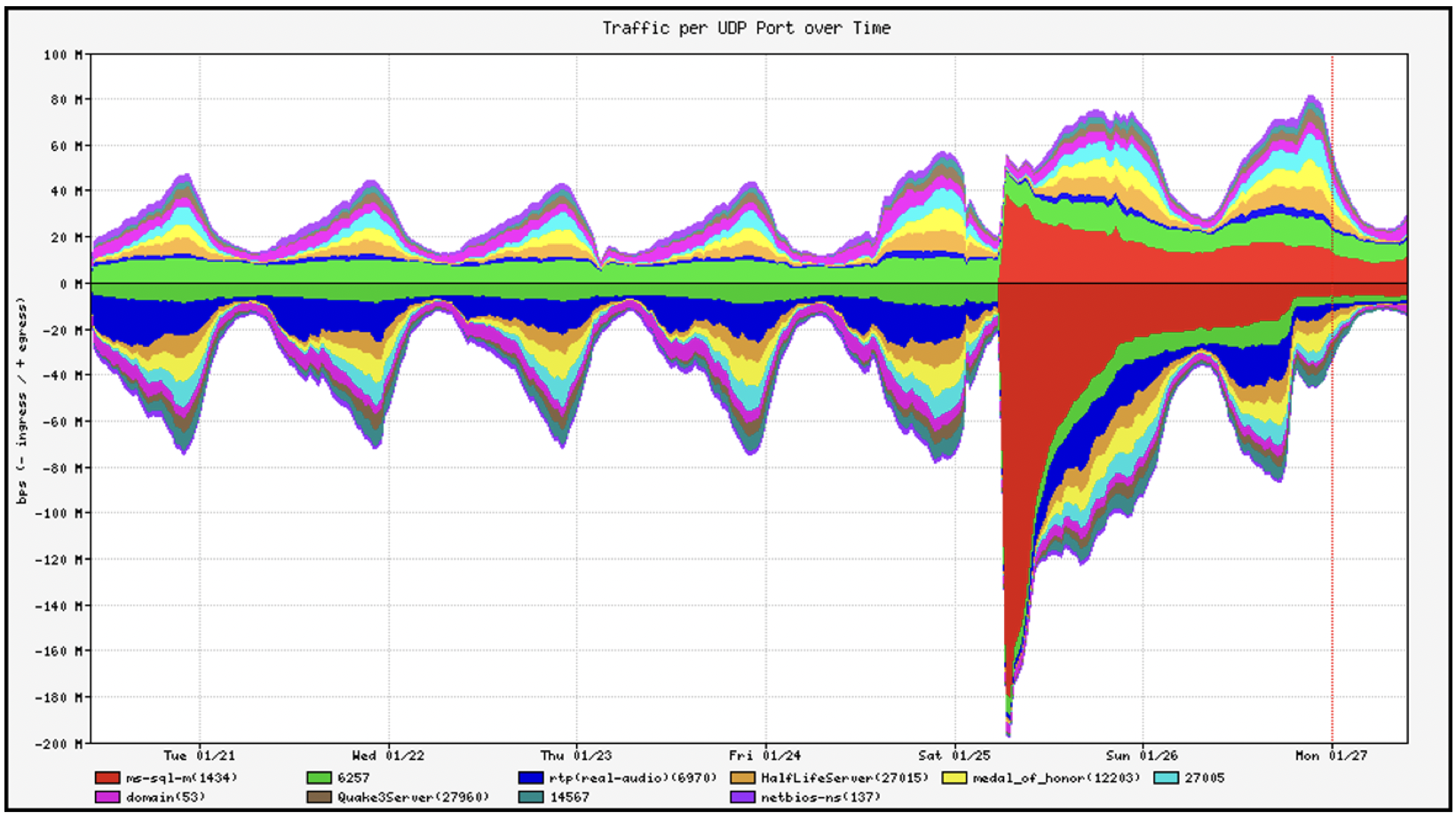

Here’s a graph from a Netscout Arbor Sightline (formerly Peakflow SP) system at the time; the onset of SQL Slammer is readily apparent:

The Response

Even though the worm began spreading quickly outside of working hours, the news and reaction spread almost as quickly. Early reports from network operators identified large-scale internet routing issues caused by peering routers succumbing to the flood of Slammer propagation traffic. Network operators who had access to the type of edge-to-edge network visibility provided by Arbor Sightline were able to rapidly detect, classify, and trace back the harmful worm propagation traffic, even though it was essentially minute-zero DDoS attack traffic which had never before caused any operational issues. The consensus recommendation was to immediately filter all traffic destined for UDP port 1434 at all network edges.

There were widespread reports of network instability and availability problems for many hours until UDP port 1434 network packet filters were widely deployed, and infected hosts were quarantined. A few networks had filtered UDP destination port 1434 prior to the worm’s arrival, but this was not very common. Many large network backbones with an abundance of capacity weathered the storm, but because many of them also continued forwarding packets during the propagation event, it might be argued they didn’t really do the smaller ISP and enterprise networks that connected to them any favors. Many network operators rejected — and still reject — calls to perform across-the-board internet traffic filtering. To paraphrase a common refrain from provider networks, “Our job is to move packets received towards the destination as efficiently as possible. We are not internet firewall providers.” Nonetheless, in extreme situations such as this, many large networks did apply temporary UDP port 1434 filters at all their edges, providing much-needed relief from the tsunami of SQL Slammer traffic to their peers and customers. Ultimately, the internet didn’t completely crash. However, a significant number of large networks melted down, at least briefly. We haven't seen another event of this magnitude since.

Fallout

Perhaps due to the severe and widespread losses of availability caused by SQL Slammer, many of those original UDP port 1434 filters persist to this day.

But do we still see SQL Slammer attempting to propagate in the wild? There doesn’t seem to be any evidence it is still running anywhere on the internet, to any significant degree. Over the last few weeks, we’ve been running a packet capture on approximately 300 widely distributed systems on the internet looking for evidence of that 376-byte UDP payload destined for UDP port 1434. Not a single Slammer payload ever arrived at any of the hosts participating in the experiment.
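A check of this kind can be sketched as follows. This is not the tooling used for the experiment above; it is a minimal, hypothetical classifier that assumes each captured packet is available as raw bytes starting at the IPv4 header, and it matches only on the two properties named in this article (UDP destination port 1434 and a 376-byte payload). The function names and test addresses are invented for illustration.

```python
import struct

SLAMMER_DST_PORT = 1434
SLAMMER_PAYLOAD_LEN = 376
IPPROTO_UDP = 17


def looks_like_slammer(ip_packet: bytes) -> bool:
    """True if the packet is IPv4/UDP to port 1434 with a 376-byte payload."""
    if len(ip_packet) < 20:
        return False
    version = ip_packet[0] >> 4
    header_len = (ip_packet[0] & 0x0F) * 4  # IHL is counted in 32-bit words
    if version != 4 or header_len < 20 or ip_packet[9] != IPPROTO_UDP:
        return False
    if len(ip_packet) < header_len + 8:
        return False
    _src, dst_port, udp_len, _csum = struct.unpack_from("!HHHH", ip_packet, header_len)
    return dst_port == SLAMMER_DST_PORT and udp_len - 8 == SLAMMER_PAYLOAD_LEN


def build_udp_packet(dst_port: int, payload: bytes) -> bytes:
    """Build a minimal IPv4/UDP packet for testing (checksums left at zero)."""
    udp = struct.pack("!HHHH", 40000, dst_port, 8 + len(payload), 0) + payload
    ip = struct.pack("!BBHHHBBH4s4s", 0x45, 0, 20 + len(udp), 0, 0, 64,
                     IPPROTO_UDP, 0, bytes([192, 0, 2, 1]), bytes([198, 51, 100, 1]))
    return ip + udp


print(looks_like_slammer(build_udp_packet(1434, b"\x00" * 376)))  # True
print(looks_like_slammer(build_udp_packet(1433, b"\x00" * 376)))  # False
```

Matching on port and length alone can produce false positives; a stricter check would also compare the payload bytes against a known sample.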

It’s theoretically possible there are still a few woefully outdated, isolated, Slammer-infected systems jabbering away on private enterprise networks behind temporary UDP port 1434 filters. Ultimately, it’s probably safe to conclude that for all practical purposes, SQL Slammer has finally passed on to that Great Honeypot in the Sky.

Although it’s more than 20 years after the Slammer event, with no visible evidence of even a single lingering infection on today’s internet, we can still observe some of those temporary UDP port 1434 filters in action. For example, from outside a university campus network as of this writing, the following works:

- dig -b0.0.0.0#1433 @internethost.example.edu. ns example.edu.

But this does not:

- dig -b0.0.0.0#1434 @internethost.example.edu. ns example.edu.

A Microsoft SQL Server reflection/amplification DDoS vector on UDP port 1434 was discovered a few years ago and was immediately abused by attackers. Sightline/TMS and AED customers are protected from these attacks via AIF Filter lists and evaluative countermeasures, but some attempts to abuse this particular DDoS vector were also inhibited by those legacy UDP port 1434 filters from 2003.

In order to protect their network and internet connectivity during the SQL Slammer event, that same university deployed network access control policies that they initially dubbed shielding filters, but which would later be referred to as network seatbelts; a version of these filters is still active on that campus network to this day.

The idea was to apply a set of filters and rate-limits on the first-hop router interface where client end-systems reside. So, for example, all 100 Mb/sec router interfaces would allow only 10 Mb/sec of aggregate UDP traffic into the network that was destined to external networks. Any matching traffic above that rate would be considered undesirable, because practically no client application would ever legitimately send that much (not over UDP, anyway).
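The effect of such a rate-limit can be sketched with a token bucket, a common way to implement policers. This is an illustrative model only, not the university's actual router configuration: the burst size, the 404-byte packet size (376-byte payload plus UDP and IPv4 headers), and the class and function names are all assumptions made for the example.

```python
class TokenBucket:
    """Simple token-bucket policer measured in bits."""

    def __init__(self, rate_bits_per_sec: float, burst_bits: float):
        self.rate = rate_bits_per_sec
        self.capacity = burst_bits
        self.tokens = burst_bits
        self.last = 0.0

    def allow(self, now: float, packet_bits: int) -> bool:
        # Refill tokens for the time elapsed since the previous packet.
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if packet_bits <= self.tokens:
            self.tokens -= packet_bits
            return True
        return False  # over the limit: drop (and, ideally, raise an alert)


def simulate(offered_bps, limit_bps, packet_bytes, duration_s, burst_bits=100_000):
    """Offer fixed-size packets at offered_bps; return (offered, passed) counts."""
    bucket = TokenBucket(limit_bps, burst_bits)
    packet_bits = packet_bytes * 8
    interval = packet_bits / offered_bps
    count = int(duration_s / interval)
    passed = sum(bucket.allow(i * interval, packet_bits) for i in range(count))
    return count, passed


if __name__ == "__main__":
    # One infected host at 100 Mb/sec behind a 10 Mb/sec UDP rate-limit.
    offered, passed = simulate(100_000_000, 10_000_000, 404, 1.0)
    print(f"offered={offered} passed={passed} ({passed / offered:.1%})")
```

In this model roughly nine out of ten scan packets are dropped at the first hop, and the sustained drops are themselves a clear signal that a host on that interface is misbehaving.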

This approach worked quite well for a long time, as long as it was implemented and maintained properly (sadly, this was not always the case). The university did not block UDP port 1434 outright so as to avoid collateral impact, but if a Slammer-infected host bypassed any local host security protections and appeared on their network, the scanning traffic would be limited and was easily identified when the edge network rate limit was reached.

Another action taken after the global Slammer incident was to enhance IP multicast protections (which is mainly of historic interest, these days). It turned out that SQL Slammer would scan the entire theoretical IPv4 address space, including 224.0.0.0/4, which was reserved for multicast use. Due to the way IP multicast networking was commonly configured, sending lots of traffic to IP multicast destinations could wreak havoc on multicast-enabled edge routers, unless they were protected with specific BCP network infrastructure self-protection mechanisms.
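To give a sense of scale, the sketch below computes what share of a uniformly random scan of the IPv4 space would land in the multicast block. Uniform scanning is an assumption made for the example (the worm's actual address generation may not have been perfectly uniform), and `expected_multicast_pps` is a hypothetical helper.

```python
import ipaddress

MULTICAST = ipaddress.ip_network("224.0.0.0/4")
IPV4_SPACE = 2 ** 32


def multicast_fraction() -> float:
    """Share of the theoretical IPv4 address space inside 224.0.0.0/4."""
    return MULTICAST.num_addresses / IPV4_SPACE


def expected_multicast_pps(scan_pps: float) -> float:
    """Expected multicast-destined packets/sec, ASSUMING uniform scanning."""
    return scan_pps * multicast_fraction()


print(multicast_fraction())
print(ipaddress.ip_address("239.1.2.3") in MULTICAST)
```

Under that assumption, one in every sixteen scan packets from an infected host would be addressed to a multicast group, which is a substantial load for a first-hop router's multicast control plane.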

Conclusion

Could this same sort of all-against-all, DDoS-like traffic excursion happen again? Anything is possible, but it seems unlikely we’d experience anything quite like we did with Slammer. After the original Morris Internet Worm, CodeRed, NIMDA, SQL Slammer, Blaster, Nachi, and a few other turbo-worms, most of the criminals creating the worms learned to include delay loops in their worm propagation code, so that the mere act of the worm spreading didn’t in and of itself constitute a DDoS attack.

On the other hand, we’ve seen extremely aggressive Mirai DDoS bot propagation on broadband access networks cause outages for more than one million broadband customers in a single incident. The outage was due to aggressive scanning by Mirai variants for more vulnerable systems to subsume and a failure to deploy network infrastructure self-protection BCPs. One can certainly envision an entirely new class of extremely ubiquitous, vulnerable IoT device which could be exploited by another hyper-aggressive internet worm.

The good news is that the operational community as a whole has learned some valuable lessons about the importance of detecting, classifying, and tracing back minute-zero DDoS attack traffic (deliberate or inadvertent) with Sightline. Combined with the ability to rapidly mitigate such attack traffic with TMS and AED, the operational community has become efficient at deploying network infrastructure BCPs to ensure the resilience of the network, even under conditions of complete capacity overload.
